Unraid as a NAS OS provides an easy way to turn old hardware into network-attached storage. Because it includes Docker, it can also be a great way to get network-attached compute by running containers on the host. But we want to do this with something other than just the included web console: managing containers by hand gets tedious and makes each container a bit of a snowflake rather than a repeatable, deployable resource that behaves the same each time it’s deployed.

In this post, you will learn how to set up and use Ansible against an Unraid host to manage resources more efficiently and apply Infrastructure as Code to your Unraid system. You’ll also learn how to run and configure individual containers, use docker-compose stacks for more complex container needs, and get the most out of Ansible’s templating engine.

Prerequisites

To use Ansible on your Unraid host, you must set up some prerequisites so that the Ansible modules work as expected. Each Ansible module may have its own requirements for pip packages that must be present on the target machine, which here is your Unraid host. However, Unraid runs as an in-memory operating system, so if you install packages via the CLI and then reboot, you will find that they have disappeared.

To work around this aspect of the Unraid OS design, we can modify the GO file to install the prerequisite packages the host needs before our Ansible playbook can do any other configuration. The GO file is an executable file that runs during the boot process to bring up all the necessary services on the Unraid OS. We can add our own services and configuration to this file to customize the host’s boot behaviour, including appending our pip installation steps.

With this file saved, the host will be ready for Ansible to access every time it boots, whether we run Ansible from our local machine or from another remote host.
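On a stock install the GO file lives at `/boot/config/go` on the flash drive. You can edit it by hand, or, as a rough and untested sketch, let Ansible keep the pip step in place. The play below assumes Python 3 and `pip3` are already available on the host at boot (Unraid does not ship them out of the box, so this usually means a plugin or package you install separately). The `unraid` host name and the choice of the `docker` pip package are assumptions for illustration; install whatever your modules' requirements list.

```yaml
# Sketch only: keeps a pip install step in Unraid's GO file so the
# Python packages come back after every reboot.
- name: Persist Ansible prerequisites across Unraid reboots
  hosts: unraid              # hypothetical inventory host name
  gather_facts: false
  tasks:
    - name: Append pip installation steps to the GO file
      ansible.builtin.blockinfile:
        path: /boot/config/go
        marker: "# {mark} ANSIBLE MANAGED: pip prerequisites"
        block: |
          # Assumes pip3 is on the PATH at this point in the boot
          pip3 install docker
```

Because `blockinfile` wraps the lines in marker comments, re-running the play should update the block in place rather than append a second copy.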

When connecting to an Unraid host, you need an entry for it in your inventory. In the default installation of Unraid, the easiest way to access the host is to use your Unraid host’s root username and password as SSH credentials.

With an inventory file set up, you can test access to your target Unraid host with an ad-hoc run of Ansible's ping module. For other configuration options, refer to the Ansible documentation.
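A minimal sketch of such an inventory entry in YAML form is shown here. The file name, the `unraid` alias, the IP address, and the environment variable name are all placeholders, not values from the original setup.

```yaml
# inventory.yml (hypothetical file name)
all:
  hosts:
    unraid:                          # hypothetical alias for the host
      ansible_host: 192.168.1.100    # replace with your Unraid host's address
      ansible_user: root
      # Read the root password from an environment variable on the control
      # machine instead of committing it; the variable name is an assumption.
      ansible_password: "{{ lookup('ansible.builtin.env', 'UNRAID_ROOT_PASSWORD') }}"
      ansible_become: true           # run every task with become, as root
```

Password-based SSH with the default connection plugin needs `sshpass` installed on the machine running Ansible. Switching to SSH keys later only means dropping the `ansible_password` line.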
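The same connectivity check can be written as a small playbook, sketched below under the assumption that the inventory defines a host called `unraid`. The ad-hoc equivalent would be along the lines of `ansible unraid -i inventory.yml -m ansible.builtin.ping`, where `inventory.yml` is a placeholder file name.

```yaml
# ping.yml (hypothetical file name)
- name: Check that Ansible can reach the Unraid host
  hosts: unraid
  gather_facts: false
  tasks:
    - name: Confirm SSH login and a usable Python on the target
      ansible.builtin.ping:
```

Run it with `ansible-playbook -i inventory.yml ping.yml`. A failure here usually points at credentials or a missing Python interpreter on the host rather than at Docker.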

Using Ansible on Unraid

Ansible provides powerful modules to run commands and execute various tasks on a target host, so you can automate and efficiently manage complex configurations. Unraid, on the other hand, offers an excellent graphical user interface (GUI) and a plethora of built-in utilities. Underneath, however, it is a Linux-based operating system, so you can configure and interact with it like any other and get the flexibility you would expect from Linux, while the GUI keeps day-to-day management quick and easy.

With Ansible, you can then easily automate everyday tasks like:

- Reading, writing, and manipulating files on the system

- Managing user accounts and settings

- Passing commands to the system, such as restarting or suspending the system

- Installing, uninstalling, and updating software packages

- Automating configuration changes and security updates

- Automating complex tasks, such as setting up firewalls or deploying applications

However, remember that this is your NAS, so be careful and leave some things for Unraid to manage. In particular, it is essential to understand the mount paths. To write to your cache, always access it under /mnt/user/cache. Similarly, to write to your array, always access it under /mnt/user/<mnt-name>. Doing this ensures that Unraid features such as splitting directories between disks and parity work as expected, and it avoids potential data loss when a parity check runs.
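As a small illustration of the path rule, a file task would target the user share path rather than an individual disk. The `appdata` share and the `myapp` directory are hypothetical names.

```yaml
- name: Create an application directory through the user share path
  ansible.builtin.file:
    path: /mnt/user/appdata/myapp   # hypothetical share and directory
    state: directory
    mode: "0755"
```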

Managing Unraid Containers Using Ansible

Using Ansible, you can write down the tasks you need when interacting with the Docker engine running on your Unraid system as code. This lets you start and stop containers quickly and repeatably.
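A minimal sketch of such tasks, using the `community.docker.docker_container` module, might look like this. The container name, image tag, and port mapping are placeholders, and the module needs the Docker SDK for Python on the Unraid host.

```yaml
- name: Start a container
  community.docker.docker_container:
    name: web                        # hypothetical container name
    image: nginx:latest              # placeholder image
    state: started
    restart_policy: unless-stopped   # come back after a host or engine restart
    published_ports:
      - "8080:80"

- name: Stop the same container without removing it
  community.docker.docker_container:
    name: web
    state: stopped
```

Setting `state: absent` instead would remove the container entirely.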

Through these tasks, your config can use all of Ansible’s looping and templating functionality, which makes your containers highly configurable for the environment they are deployed to and the variables you provide.

However, when deploying containers to Unraid, make sure you use the become flag so these commands execute as the root user. This is due to Unraid's permission structure and the root user's access to the Docker engine. We have done this through the inventory entry for Unraid, so all commands will always run as root. If you want to be more specific, the become flag can be set at the task level, too.
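For example, a single task can loop over a list of container definitions. This is only a sketch: the `unraid_containers` variable and its entries are invented for illustration, and in practice the list would more likely live in group or host variables.

```yaml
- name: Deploy a list of containers
  vars:
    unraid_containers:               # hypothetical variable
      - name: web
        image: nginx:latest
        port: 8080
      - name: web-staging
        image: nginx:latest
        port: 8081
  community.docker.docker_container:
    name: "{{ item.name }}"
    image: "{{ item.image }}"
    state: started
    published_ports:
      - "{{ item.port }}:80"         # host port comes from the list entry
  loop: "{{ unraid_containers }}"
```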
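At the task level, the flag is set alongside the module call, roughly as follows. The container name is a placeholder.

```yaml
- name: Inspect a container as root
  become: true
  become_user: root
  community.docker.docker_container_info:
    name: web                        # hypothetical container name
  register: web_info
```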

Using Docker-Compose Configuration Inline

In addition to managing individual containers with Ansible, you can combine docker-compose with Ansible's templating system to achieve dynamic configurations. Note that this requires getting the docker-compose file onto the remote host; the docker-compose file is then executed on that remote host by Docker.

Using docker-compose and Ansible, you can define the docker-compose stack directly in your Ansible config. All of the same state actions are available to control whether the stack should exist and whether it should be running.
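A sketch of an inline stack with the `community.docker.docker_compose` module and its `definition` key could look like the task below. The project name and service are placeholders. This module depends on the `docker-compose` Python package on the host and has been deprecated in favour of `community.docker.docker_compose_v2` in newer releases of the collection, so check which one your installed version provides.

```yaml
- name: Run a docker-compose stack defined inline
  community.docker.docker_compose:
    project_name: demo               # hypothetical project name
    state: present                   # use absent to tear the stack down
    definition:
      version: "3"
      services:
        web:
          image: nginx:latest        # placeholder image
          restart: unless-stopped
          ports:
            - "8080:80"
```

Adding `stopped: true` alongside `state: present` keeps the stack defined but stops its containers.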

Using Local Docker-Compose Configuration Files

You can also template the docker-compose file onto the remote host and then have Ansible execute it there to bring up the stack. In my personal experience, this is the ideal way to run containers with docker-compose, as there have been issues in the past where not all config was available through inline stacks defined under the definition key.

One last thing to note about using Docker on your Unraid box: any containers you give a restart policy will come back up after a restart of your Unraid system or of the Docker engine itself. This means all of your configured Docker services persist between restarts without rerunning your Ansible deployment.
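The template-then-run flow can be sketched with three tasks. The paths, the `demo` stack name, and the `docker-compose.yml.j2` template are hypothetical, and the same caveat about the older `docker_compose` module applies (the newer `docker_compose_v2` module also accepts `project_src`).

```yaml
- name: Create a directory for the stack
  ansible.builtin.file:
    path: /mnt/user/appdata/demo     # hypothetical location
    state: directory
    mode: "0755"

- name: Template the docker-compose file onto the host
  ansible.builtin.template:
    src: docker-compose.yml.j2       # hypothetical Jinja2 template in your repo
    dest: /mnt/user/appdata/demo/docker-compose.yml
    mode: "0644"

- name: Bring up the stack from the templated file
  community.docker.docker_compose:
    project_src: /mnt/user/appdata/demo
    state: present
```

Keeping the rendered file on the host also means you can inspect exactly what was deployed.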

Monitoring Containers on Unraid

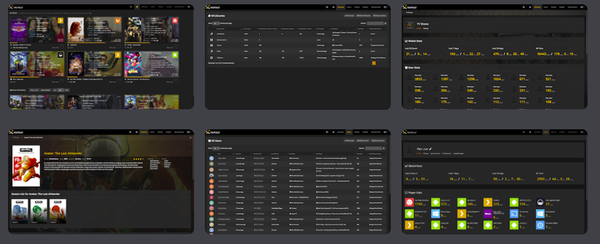

Once you have deployed containers using Ansible with either of the two options demonstrated above, you can still use the Unraid Docker GUI to monitor the containers running on the system. This is very useful for seeing what is running at a glance, accessing logs, or running operations like start, stop, or restart on a container.

However, remember that even though you may change the state of a running container in the Unraid GUI, the next time you run your Ansible playbook, the state defined there is the source of truth and is what gets applied to the environment. Generally, once you start deploying resources like containers with code and tools like Ansible, updating your config and re-running your deployment should be easier than updating things manually via the GUI. Embrace Infrastructure as Code so you can do more with your time!

Third-party options

You may also want to consider third-party apps for further monitoring of your containers. These can offer an expanded feature set that is particularly useful if you’re managing many containers or containers across many machines on your network. Options include Portainer, Better Stack, and Middleware, each with its own feature set. Evaluate whether each one fits the needs of your stack.

Wrapping up

You should now have a better idea of how to automate your Unraid systems with Ansible. With Ansible, you can quickly write configuration files managed as code and apply them for consistent results on one machine or many. Using Ansible modules, you can manage all components of the Unraid OS, from the file system to compute running on Docker or KVM.

Once you are managing your Unraid host with Ansible, check out the following posts for a list of 10 apps you could easily install onto your host using Ansible with Docker, or for how you can use Slackware packages to extend your system's functionality.
